"I Survived. I Lived. Then I Woke Up."

Pokémon Go Players Built a Robot Navigation System — And Most of Them Had No Idea

Niantic used 30 billion Pokémon Go scans to build a centimeter-accurate AI map now guiding real-world robots. Here's exactly how they did it — and what you agreed to.

Christopher J

3/24/2026 · 7 min read


You thought you were catching Pikachu. You were actually mapping the planet.

Over the past decade, Niantic quietly turned millions of Pokémon Go players into the most productive — and most unknowing — cartographers in human history. The result? A 30-billion-image visual database so precise that delivery robots now use it to navigate city sidewalks with centimeter-level accuracy. GPS can't touch it.

This is the story of how a mobile game became the backbone of next-generation robotics infrastructure.

The World's Most Elaborate Side Quest

Niantic didn't build a map. They crowd-sourced one — at planetary scale.

Every time a player tapped a PokéStop and completed an AR scan "mission," they were recording a geotagged video of a real-world location: a statue, a storefront, a park entrance. Each frame came loaded with metadata — camera angle, movement data, GPS coordinates — creating what engineers call "posed images." Perfect raw material for building 3D models of the physical world.

Over ten years, across millions of players, in hundreds of cities, those scans stacked up. Thirty billion images and counting. Niantic didn't need satellites or survey crews. They had us.

How Niantic Pulled It Off

The Scan, the Snap, the Stack

The data collection pipeline was built directly into the game loop. Players at level 20 and above could accept optional scan tasks — recording 20-to-30-second walkaround videos of PokéStops or Gyms in varying lighting and weather conditions. The app incentivized it with in-game rewards.

What players experienced as a quick AR mini-game was, in engineering terms, a multi-angle photogrammetric capture session. Popular locations were scanned repeatedly across seasons, keeping the models current as storefronts changed and cities evolved.

From Selfies to 3D Cities

Once the images hit Niantic's servers, algorithms took over.

Techniques called Structure-from-Motion (SfM) and SLAM (Simultaneous Localization and Mapping) triangulate overlapping 2D frames into 3D geometry. Billions of those reconstructions were then fused into what Niantic now calls the Large Geospatial Model (LGM), a shared spatial "brain" that doesn't just store images but understands them. It can label roads, buildings, and obstacles. It can predict what a location should look like from an angle it has never directly seen, extrapolating geometry the way a person can guess what the back of a church looks like after seeing only the front.
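To make the triangulation step concrete, here is a minimal Python sketch of the geometry for a single 3D point seen in two posed images. It assumes the camera poses are already known (which is what makes an image "posed") and uses a simple pinhole camera model. The `Camera` type, the helper functions, the intrinsics (500 px focal length, principal point at 320, 240), and the choice of the midpoint method are all hypothetical illustrations, not Niantic's pipeline. Real Structure-from-Motion systems also estimate the poses themselves and typically refine poses and points jointly across many views (bundle adjustment).

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = List[List[float]]


@dataclass
class Camera:
    """Hypothetical posed image: pinhole intrinsics plus a known pose."""
    f: float      # focal length in pixels
    cx: float     # principal point x (pixels)
    cy: float     # principal point y (pixels)
    center: Vec3  # camera position in world coordinates
    R: Mat3       # camera-to-world rotation matrix (row-major)


def matvec(M: Mat3, v: Vec3) -> Vec3:
    return tuple(sum(M[i][j] * v[j] for j in range(3)) for i in range(3))


def transpose(M: Mat3) -> Mat3:
    return [[M[j][i] for j in range(3)] for i in range(3)]


def dot(a: Vec3, b: Vec3) -> float:
    return sum(x * y for x, y in zip(a, b))


def project(cam: Camera, X: Vec3) -> Tuple[float, float]:
    """World point -> pixel; used here only to simulate an observation."""
    offset = tuple(x - c for x, c in zip(X, cam.center))
    xc, yc, zc = matvec(transpose(cam.R), offset)  # world frame -> camera frame
    return cam.f * xc / zc + cam.cx, cam.f * yc / zc + cam.cy


def pixel_to_ray(cam: Camera, u: float, v: float) -> Vec3:
    """Back-project a pixel into a world-space ray direction."""
    d_cam = ((u - cam.cx) / cam.f, (v - cam.cy) / cam.f, 1.0)
    return matvec(cam.R, d_cam)


def triangulate_midpoint(c1: Vec3, d1: Vec3, c2: Vec3, d2: Vec3) -> Vec3:
    """Midpoint of the shortest segment between rays c1 + s*d1 and c2 + t*d2."""
    w0 = tuple(a - b for a, b in zip(c1, c2))
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b  # zero when the rays are parallel
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; no baseline to triangulate")
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1 = tuple(ci + s * di for ci, di in zip(c1, d1))
    p2 = tuple(ci + t * di for ci, di in zip(c2, d2))
    return tuple((x + y) / 2 for x, y in zip(p1, p2))


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
cam_a = Camera(500.0, 320.0, 240.0, (0.0, 0.0, 0.0), IDENTITY)
cam_b = Camera(500.0, 320.0, 240.0, (1.0, 0.0, 0.0), IDENTITY)  # 1 m to the right

landmark = (0.5, 0.2, 4.0)  # e.g. a corner of a statue, 4 m in front
uv_a, uv_b = project(cam_a, landmark), project(cam_b, landmark)
estimate = triangulate_midpoint(
    cam_a.center, pixel_to_ray(cam_a, *uv_a),
    cam_b.center, pixel_to_ray(cam_b, *uv_b),
)
print(estimate)  # approximately (0.5, 0.2, 4.0)
```

The midpoint method is chosen for readability: with noise-free pixels the two rays meet exactly, and with real, noisy observations it returns the point halfway along their closest approach.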

Niantic says the neural networks behind it add up to more than 150 trillion parameters. For context: that's not a map. That's a model of how the world looks.

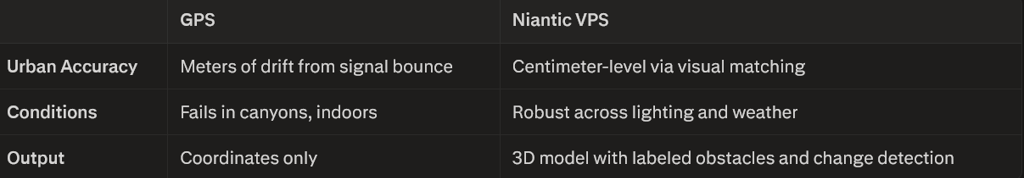

GPS vs. VPS: No Contest in the City

GPS is great — until you're surrounded by skyscrapers. In dense urban environments, satellite signals bounce off buildings and produce positional drift of several meters. That's the difference between a delivery robot arriving at your door and arriving at your neighbor's.

Niantic's Visual Positioning System (VPS) solves this by doing what humans naturally do: recognizing a place by looking at it.

VPS compares a live camera feed (from a robot, phone, or AR device) against the LGM's reference database in real time. It returns not just a location but a full pose: position plus orientation, in up to six degrees of freedom (three for position, three for rotation). It works at global scale, from a sidewalk in Manhattan to a shopping district in Tokyo.
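Niantic has not published how VPS computes its answer internally, but many visual-localization pipelines end with the same kind of fitting problem: once points in the live view have been matched to points in a reference map, find the pose that best aligns them. The Python sketch below shows the simplest version of that idea, a least-squares rigid alignment in 2D that recovers a 3-DoF pose (x, y, heading) from known matches. The function `estimate_pose_2d` and the landmark coordinates are hypothetical. A real 6-DoF system would solve the 3D analogue, for example Perspective-n-Point with outlier rejection such as RANSAC.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def estimate_pose_2d(local: List[Point], mapped: List[Point]) -> Tuple[float, float, float]:
    """Least-squares rigid fit so that mapped[i] ~= R(theta) @ local[i] + (tx, ty).

    `local` holds landmarks as the robot observes them (robot at the origin,
    facing +x); `mapped` holds the same landmarks in the reference map. Because
    the robot sits at the local origin, (tx, ty) is its map position and theta
    is its heading.
    """
    if len(local) != len(mapped) or len(local) < 2:
        raise ValueError("need at least two matched landmarks")
    n = len(local)
    lx = sum(p[0] for p in local) / n
    ly = sum(p[1] for p in local) / n
    mx = sum(q[0] for q in mapped) / n
    my = sum(q[1] for q in mapped) / n
    # Sum the dot and cross terms of the centered correspondences;
    # the best rotation angle is atan2(cross_sum, dot_sum).
    dot_sum = cross_sum = 0.0
    for (px, py), (qx, qy) in zip(local, mapped):
        px, py, qx, qy = px - lx, py - ly, qx - mx, qy - my
        dot_sum += px * qx + py * qy
        cross_sum += px * qy - py * qx
    theta = math.atan2(cross_sum, dot_sum)
    c, s = math.cos(theta), math.sin(theta)
    # The translation moves the rotated local centroid onto the map centroid.
    tx = mx - (c * lx - s * ly)
    ty = my - (s * lx + c * ly)
    return tx, ty, theta


# Simulate a robot at map position (10, 5) with a 30-degree heading.
true_x, true_y, true_theta = 10.0, 5.0, math.radians(30)
local_obs = [(4.0, 0.0), (3.0, 2.0), (6.0, -1.0), (2.0, -3.0)]
map_pts = [
    (true_x + math.cos(true_theta) * px - math.sin(true_theta) * py,
     true_y + math.sin(true_theta) * px + math.cos(true_theta) * py)
    for px, py in local_obs
]
x, y, heading = estimate_pose_2d(local_obs, map_pts)
print(f"x={x:.3f} m, y={y:.3f} m, heading={math.degrees(heading):.1f} deg")
# prints x=10.000 m, y=5.000 m, heading=30.0 deg
```

The key point the sketch illustrates is that the output is a pose, not just a coordinate: the same fit that places the robot also tells it which way it is facing.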

The Business Pivot Nobody Saw Coming

Niantic built a game company. Then they realized they'd accidentally built something more valuable than any game.

In a move that mirrors Google's evolution from search engine to mapping infrastructure, Niantic spun off Niantic Spatial to license its VPS and LGM technology to robotics companies, logistics firms, defense contractors, and industrial operators. Their first high-profile partner: Coco Robotics, whose sidewalk delivery bots now navigate urban environments using Niantic's spatial intelligence.

The long-term vision is bigger. By the end of 2026, Niantic plans to integrate the LGM with Large Language Models and World Foundation Models, creating a shared spatial reasoning layer for fleets of robots, drones, and AR glasses operating simultaneously in the real world. Think of it as AWS for physical space: infrastructure that any machine can query to answer the two most fundamental questions a robot can ask, "Where am I?" and "What do I see?"

Enterprises use it for digital twins and facility inspections. Public sector operations deploy it for GPS-denied navigation in tunnels and dense corridors. Predictive analytics, crowd-flow modeling, and universal spatial APIs are already on the roadmap.

The Privacy Question You're Already Asking

Here's where things get complicated.

What Niantic Actually Told You

Technically, Niantic disclosed all of this. Their Terms of Service and Privacy Policy state clearly that for AR scan content (photos, videos, and 3D scans captured through the app), you grant Niantic a non-exclusive, transferable, sublicensable, worldwide, royalty-free, perpetual license to use, modify, and build derivative products from your contributions. That includes sharing rights with third-party partners.

In-app, AR scan tasks were presented as optional missions with pre-upload explanations that framed participation as contributing to "AR mapping and location-based technology."

What Most Players Heard

None of that.

Not because it wasn't in the fine print (it was), but because a 20-second walkaround of a statue feels like a game mechanic, not a contribution to a multi-billion-image AI training set. The scale (30+ billion images), the end use (robot navigation systems and commercial AI infrastructure), and the timeline (a decade of continuous collection) were never part of the in-app experience. They were buried in documents most players never opened.

The result sits squarely in a now-familiar gray zone: technically disclosed, practically invisible. Users can opt out of future scans in some cases, but images already processed cannot be easily deleted or recalled — the model has already learned from them.

This isn't unique to Niantic. It's the standard architecture of the modern data economy: consent buried in agreements, value extracted at scale, users rewarded with game points while the real asset compounds in a server farm.

What Comes Next

The LGM isn't finished. It's accelerating.

Niantic's roadmap calls for multisensor fusion, ingesting data from phones, drones, satellites, and ground robots into unified edge-and-cloud pipelines. The output evolves beyond static maps into living models: persistent, continuously updated 3D reconstructions of cities that change as the world around them changes.
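"Multisensor fusion" covers many techniques. One of the simplest, shown here as an illustration rather than anything Niantic has described, is inverse-variance weighting: combine independent estimates of the same quantity, trusting each in proportion to its precision. For two independent Gaussian estimates it gives the same result as a scalar Kalman filter measurement update. The function `fuse` is hypothetical, and the 5 m and 0.05 m standard deviations are made-up values chosen only to mirror the several-meters-versus-centimeters gap between GPS and VPS.

```python
from typing import List, Tuple


def fuse(estimates: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Inverse-variance weighted mean of independent (value, std_dev) estimates.

    Returns the fused value and its standard deviation, which is never
    larger than the smallest input standard deviation.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(sigma <= 0 for _, sigma in estimates):
        raise ValueError("standard deviations must be positive")
    weights = [1.0 / (sigma ** 2) for _, sigma in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, (1.0 / total) ** 0.5


# One coordinate axis, in meters (illustrative numbers only).
gps_reading = (12.0, 5.0)    # coarse: several meters of uncertainty
vps_reading = (14.3, 0.05)   # fine: a few centimeters of uncertainty
fused_value, fused_sigma = fuse([gps_reading, vps_reading])
print(f"fused: {fused_value:.3f} m +/- {fused_sigma:.3f} m")
```

Because the precise estimate carries a weight 10,000 times larger in this made-up example, the fused value lands almost on top of it; production systems layer motion models and outlier handling on top of this basic idea.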

The services built on top expand beyond localization into semantics (what objects are where), visualization (immersive planning tools), and inference (detecting changes, planning paths, predicting flows). Billions of devices contributing data in continuous capture-query loops. A living map for a world full of machines that need to see.

Pokémon Go was the onboarding mechanism. The real product was always the map.

KEY TAKEAWAYS

Niantic collected over 30 billion geotagged images from Pokémon Go AR scans over a decade, building one of the world's largest real-world visual datasets

These images trained the Large Geospatial Model (LGM) — a neural map capable of centimeter-level positioning, 3D reconstruction, and obstacle labeling

The Visual Positioning System (VPS) built on this data dramatically outperforms GPS in urban environments, providing position and orientation in up to 6 degrees of freedom

Niantic has pivoted from a gaming company to a spatial infrastructure provider, licensing VPS/LGM to robotics, logistics, and defense via Niantic Spatial

Players technically consented via ToS, but the scale and commercial end-use of their contributions were not practically communicated — a growing pattern in the data economy

Niantic plans to integrate the LGM with LLMs and World Foundation Models by the end of 2026, making it a shared spatial intelligence layer for autonomous fleets

The dataset competes with Street View-style services but offers a critical advantage: ground-level, human-perspective, dynamically updated captures — exactly what robots navigating human environments need

FAQs

Q: Did Pokémon Go players consent to their scans being used for robots?

A: Technically, yes. Niantic's Terms of Service grant the company a perpetual, sublicensable license to use and commercialize AR scan data. In practice, most players had no idea their contributions were feeding a commercial AI system at this scale. It was disclosed; it just wasn't communicated.

Q: How accurate is Niantic's VPS compared to standard GPS?

A: Standard GPS drifts by several meters in dense urban areas due to signal multipath from buildings. Niantic's VPS achieves centimeter-level accuracy by visually matching live camera feeds to its reference database, outputting full 3D position and orientation rather than coordinates alone.

Q: What is the Large Geospatial Model (LGM) and how does it work?

A: The LGM is a neural model trained on 30+ billion posed images from Pokémon Go scans and other sources. It uses techniques like Structure-from-Motion and SLAM to reconstruct 3D environments, then compresses this into neural representations that machines can query for localization, semantic labeling, and change detection in real time.

Q: Who is Niantic Spatial selling this technology to?

A: Current clients include Coco Robotics for sidewalk delivery navigation. The broader market includes logistics, defense (GPS-denied navigation), industrial operations (digital twins, facility inspection), and AR device manufacturers. The long-term positioning is infrastructure licensing — spatial computing's equivalent of cloud services.

Q: Can players opt out or delete their scan data?

A: Players can opt out of future AR scan contributions through in-app and account settings. However, images already processed into the LGM cannot be easily deleted: once a scan has been ingested and used for training, unwinding its contribution from a neural model isn't technically straightforward.

CALL TO ACTION

The line between "playing a game" and "contributing to a global AI system" has been invisible for years — and this story makes it impossible to ignore. If you found this breakdown useful, share it with someone who still thinks Pokémon Go is just a game.

And if you want more breakdowns of how tech companies turn your everyday behavior into billion-dollar infrastructure without making it obvious, drop a comment below or join the list. The next one is already being built.

Do you have a life-changing story and want to help others with your experience and inspiration? Please DM me or send me an email at the links below.

Contact Me

© 2025. All rights reserved.
